A purported leak of 2,500 pages of internal documentation from Google sheds light on how Search, the most powerful arbiter of the internet, operates.

The leaked documents touch on topics like what kind of data Google collects and uses, which sites Google elevates for sensitive topics like elections, how Google handles small websites, and more. Some information in the documents appears to be in conflict with public statements by Google representatives, according to Fishkin and King.

Can’t wait for selfhosted web search to become better.

You mean hosting your own crawler/indexer? That doesn’t really sound like a thing you could do cost-effectively.

No problem, we crowdsource the crawling, torrent-style.

We outsourced that to Google for reasonable performance reasons. But they shit the bed, so now there’s no choice but to do it ourselves.

Ooh, that might be an interesting app to run on Veilid.

What is that, and how does it apply?

Source: https://en.wikipedia.org/wiki/Veilid

Surprisingly, it’s very doable: it requires only basic technical knowledge and relatively minimal computing resources (it runs in the background on your computer).

https://yacy.net/ (GitHub)

I have a Tampermonkey script that sends YaCy to crawl any website I visit, and it keeps a relatively good index of those sites for personal use. Combine YaCy with ~300 GB of Kiwix databases, add SearXNG as a frontend, and you have a pretty strong self-hosted search engine.

Of course, you still need to supplement your searches with other search engines, as YaCy does not crawl the whole web, just what you tell it to.

I encourage anyone who’s even slightly interested in this stuff to try YaCy. It’s an ancient piece of software, but it still works very well and is not an abandoned project yet!

–

I personally use YaCy mostly in private mode, but it does have the distributed network as well.

Yeah, I guess the P2P component sort of solves part of the issue I was imagining by distributing indexes and crawling. I was thinking that people were trying to run all of Google on a Raspberry Pi at home.

This is interesting. Have you had it index Reddit? I’m just wondering how much storage space the database takes up.

Hi!

Great question! I don’t crawl Reddit, but this applies to other large sites as well. Reddit has, at this very moment, banned the IP range where I host my YaCy instance (Hetzner). I just checked my index, and I do have 257k Reddit pages indexed through a Teddit instance I used to run. That’s from before Reddit’s API enshittification, and I’m going to delete those right now.

As for how the crawling is done: you define a crawling depth, which limits how much content is crawled from the site.

… etc.
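To make the depth idea concrete, here is a minimal sketch of a depth-limited breadth-first crawl over a made-up, in-memory link graph. The `site` dict and page names are hypothetical stand-ins for fetching and parsing real pages, and the sketch assumes depth 0 means "only the starting page". It illustrates the general technique, not YaCy’s actual crawler code.

```python
from collections import deque


def crawl(start: str, links: dict[str, list[str]], max_depth: int) -> list[str]:
    """Breadth-first crawl from `start`, following links at most `max_depth` hops.

    `links` is a hypothetical stand-in for downloading a page and extracting
    its outgoing links; no network access happens here.
    """
    seen = {start}
    order = []                      # pages in the order they would be indexed
    queue = deque([(start, 0)])     # (page, hops from the start page)
    while queue:
        page, depth = queue.popleft()
        order.append(page)          # "fetch" and index this page
        if depth == max_depth:
            continue                # index it, but don't follow its links
        for nxt in links.get(page, []):
            if nxt not in seen:     # never queue the same page twice
                seen.add(nxt)
                queue.append((nxt, depth + 1))
    return order


# Hypothetical site: the home page links to /a and /b, which link deeper.
site = {
    "/": ["/a", "/b"],
    "/a": ["/a1", "/"],
    "/b": ["/b1"],
    "/a1": ["/a2"],
}

print(crawl("/", site, 1))  # ['/', '/a', '/b']
print(crawl("/", site, 2))  # ['/', '/a', '/b', '/a1', '/b1']
```

Each extra level of depth can multiply the number of pages fetched, which is why going from depth 1 to depth 2 can noticeably grow an index.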

I have my Tampermonkey scripts set to a crawling depth of only 1 at the moment (actually, I just set them to 2; kinda curious how much more I’ll be crawling), and I manually crawled some local news sites out of curiosity at the beginning. My database is currently relatively small, about 86.38 GB according to YaCy, which stores approximately 2.6 million documents in YaCy’s Solr index.
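For the storage question, a quick back-of-the-envelope calculation from the figures in the post above. It assumes "gigabytes" means 10^9 bytes (if YaCy reports binary GiB, the per-document figure is roughly 7% higher), and the 10-million-document line is a naive linear extrapolation, not a measured number.

```python
def bytes_per_document(index_bytes: float, documents: int) -> float:
    """Average on-disk footprint per indexed document."""
    return index_bytes / documents


# Figures quoted above; assumes decimal gigabytes (10**9 bytes).
INDEX_BYTES = 86.38e9
DOCUMENTS = 2_600_000

per_doc = bytes_per_document(INDEX_BYTES, DOCUMENTS)
print(f"about {per_doc / 1e3:.1f} kB per document")  # about 33.2 kB per document

# Naive linear extrapolation (assumption: real index growth need not be linear).
print(f"10M documents: about {per_doc * 10_000_000 / 1e9:.0f} GB")  # about 332 GB
```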

–

YaCy has tons of crawling options, so you can customize how much it crawls and even filter out overly large sites by setting a maximum number of documents when you send YaCy there.

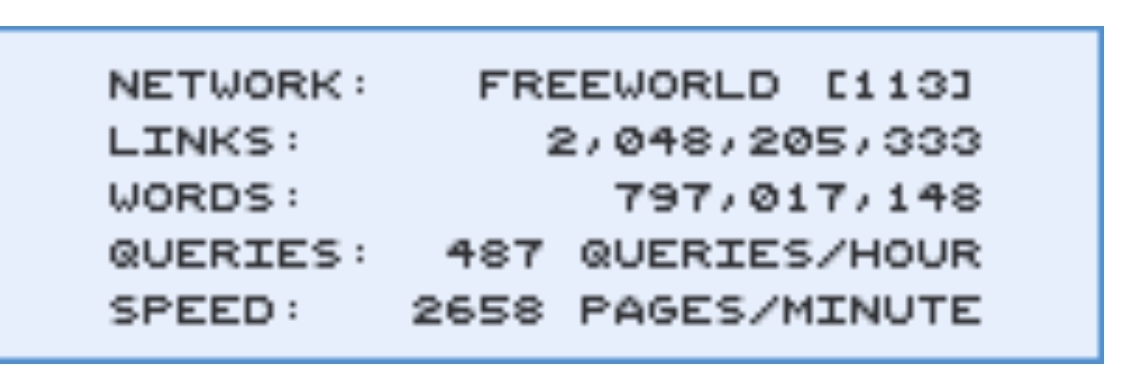

Screenshot of YaCy’s interface for starting a crawl.
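The "maximum number of documents" filter can be pictured as a per-host counter. This is a hypothetical sketch (the `cap_per_host` function and example URLs are made up for illustration), assuming the limit is counted per hostname; it is not YaCy’s implementation.

```python
from collections import Counter
from urllib.parse import urlsplit


def cap_per_host(urls: list[str], max_docs_per_host: int) -> list[str]:
    """Keep URLs in order, dropping any beyond `max_docs_per_host` for the same host."""
    counts: Counter[str] = Counter()
    kept = []
    for url in urls:
        host = urlsplit(url).hostname or ""  # e.g. "big.example"
        if counts[host] < max_docs_per_host:
            counts[host] += 1
            kept.append(url)
    return kept


queue = [
    "https://big.example/1",
    "https://big.example/2",
    "https://big.example/3",
    "https://small.example/",
]
print(cap_per_host(queue, 2))
# ['https://big.example/1', 'https://big.example/2', 'https://small.example/']
```

A cap like this stops one huge site from crowding out everything else in a small personal index.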

–

The Tampermonkey script I’ve been talking about in these posts is a very simple one: https://github.com/JeremyRand/YaCyIndexerGreasemonkey

Hit me up if you guys have more questions! I’m by no means an expert on YaCy, but I will do my best to answer.

Right!

Ars

Federated bookmarks?

Federated directories. We’re going back to Yahoo like it’s 1995

Webrings!!!

<under_construction.gif>

Uh… I know we’re all just having fun here, but I need to be part of a webring again. If anyone is more than joking, I kinda need to know about it. Thanks.

There are tons of webrings still going these days!

Seriously? Cool. I’m going to go do some research then. And maybe entirely change the purpose of my blog, just to fit into one…

Can you share a link to it, if you’re comfortable with that?

I loved Geocities!

Neocities is trying to be a modern reincarnation: https://neocities.org/

I mistook that for Neopets.

Yahoo patiently plotting its return from Japan.

I’m so ready for something like this. I’ve cleaned up my bookmarks and been waiting for alternatives to search engines.

SearxNG

You could use Common Crawl; it’s run by a nonprofit.

https://en.wikipedia.org/wiki/Common_Crawl

Look up the YaCy repo on GitHub.

How is that even supposed to work? By definition, these search engines need massive databanks to search through. Either you need your own crawler and indexer, which is more than just inefficient, or you are limited to a relatively short list of curated static results.

If they’re taking tips from Google, why would they get better?

Google actually was good, so there’s probably some good information in this documentation. If nothing else we can perhaps figure out what “went wrong.”

Edit: I’ve been reading the blog post by the person the leak appears to have mainly been shared with, and there’s a lot of in-depth analysis being done there, but I’m not seeing a link to the actual documents. This is a huge article, though, so I might be overlooking it.

That was an interesting read. Thanks for linking to it.

What are the current contenders?

Ars Technica this week: Bing outage shows just how little competition Google search really has

The referenced search engine comparison by Rohan “Seirdy” Kumar

Can’t emphasise enough that this piece is a very necessary read for anyone who wants to know about search, not just because it says good things about us, but because of the depth of research that has gone into it. Most of the time, when you encounter an article about indexes, the author just takes whatever a (meta)search engine says about itself, without even checking privacy policies for “relationships with Microsoft” etc. or doing any comparative work.

I’ve been using Kagi and really like it so far. It’s not good for local stuff, but afaik only Google and Bing have the resources and userbase for things like maps and reviews. It’s designed to be an ad-free ‘premium’ search engine and only earns revenue from users paying for membership.

OpenStreetMap’s platform is the only real way to compete against Google and Apple, and it’s why Microsoft, even though it has Bing Maps, has licensed resources like satellite imagery to OSM for mapping. It’s awesome in more populated areas, but there’s still a lot to map in rural places outside the EU.

Reviews are harder. Right now the leading open platform afaik is Open Reviews (aka Mangrove Reviews), which has tie-ins to OSM projects like MapComplete. OsmAnd and Organic Maps have open tickets to hook into that ecosystem. You’re right about the userbase problem, though; I think it (or a successor) needs ActivityPub federation to really take off. That being said, there are several active non-Google nonfree alternatives like Yelp and TripAdvisor, as well as niche sites for things like camping, parks, and schools.

The only one I know of that isn’t a proxy search is YaCy.

I was looking at it the other day; unfortunately, it’s got quite poor results.

That tracks

YaCy, Mwmbl, Alexandria, Stract, Marginalia to name a few.